Here are the top five takeaways from our just-completed survey:

1. The majority of people know about or use one or more AI tools

2. ChatGPT is by far the most popular

3. AI-generated content vs human: does it matter? Yes!

4. Is AI coming after your job? Maybe, but not anytime soon

5. Not everybody needs or wants artificial intelligence in their life

Artificial intelligence, commonly called AI, has taken the world by storm lately. It’s everywhere, from cute little games for kids and cookbooks to cars and heavy industries. But is it really that new?

The simple answer is no, it’s not that new. What has changed dramatically in the last few years is the way we understand and use it, and that’s why we perceive it as groundbreaking and “the new cool kid on the block.”

Some sources mark the birth of AI in 1935, while others place it between 1952 and 1956. The point is that artificial intelligence goes way back, even though it looked completely different initially.

Recent advancements brought it into the spotlight and changed the game. Still, industries like automotive and construction have been using something similar to what we see today for years. And that’s before counting the things you use every day: social media, customer service, healthcare, and e-commerce.

This is precisely why we wanted to know your opinion about AI, its real-world use, and how you see it evolving in the next couple of years. Perception versus reality played an essential role in building this survey, and the results are more than interesting.

Do you know what an AI is?

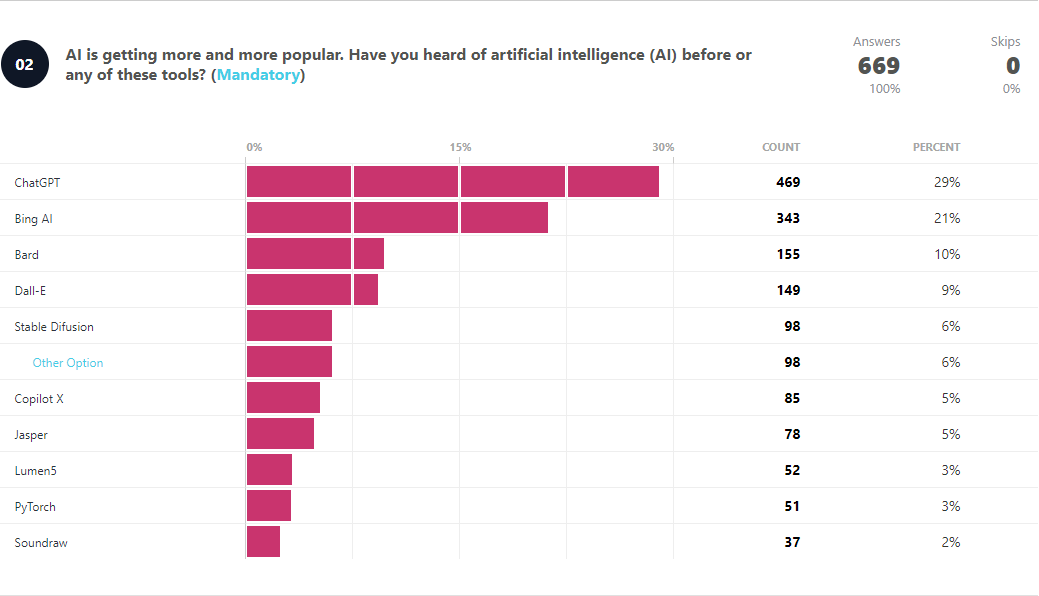

According to our results, the answer is a definitive yes. This is not surprising at all, considering the ongoing race for supremacy between ChatGPT, Bing AI, and Bard AI. These three are also the best-known and most-used AI tools among more than half of our respondents.

ChatGPT and Bing AI have a comfortable lead at the moment, with 28% and 20% of respondents, respectively, knowing what they are. Google’s Bard AI is trailing them, but that’s mainly because it was late to the party, as ChatGPT hit the masses a lot sooner and with a bigger bang.

Another reason for the incredible success of ChatGPT is its usability. The fact that anyone can access it so easily and build email responses, bedtime stories, or even the recipe for a late Sunday brunch makes it the go-to for most. We also did a head-to-head between ChatGPT and Bard AI, and you can check it out to see the results.

But the most interesting takeaway is that almost a quarter of respondents are familiar with more niche tools like Soundraw, PyTorch, Lumen5, Midjourney, and Character.ai. This indicates that people find value in working with an AI tool to improve their daily workflows, no matter the use scenario or the industry.

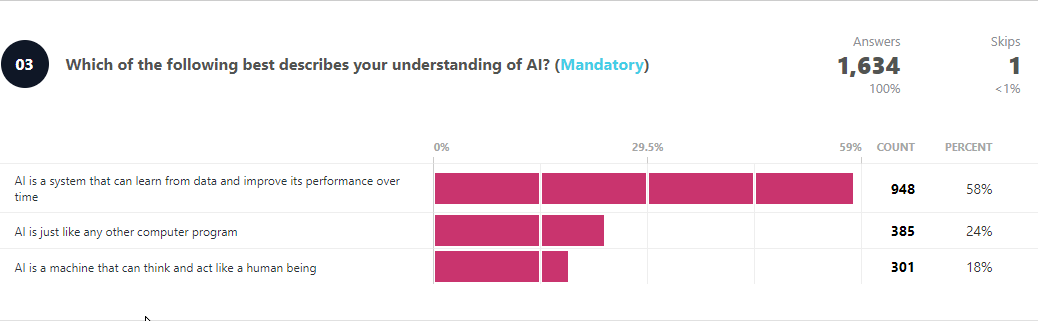

And it’s also a clear indication that most people understand exactly what an AI is and how it works, as nearly 60% of the polled users confirmed.

A bit concerning is that 24% of respondents think of AI as just any other computer program. While there must be a clear definition of what an AI should and shouldn’t be or do, underestimating its use and capabilities could lead to security risks. Check out this detailed perspective to learn more about why artificial intelligence isn’t just another software.

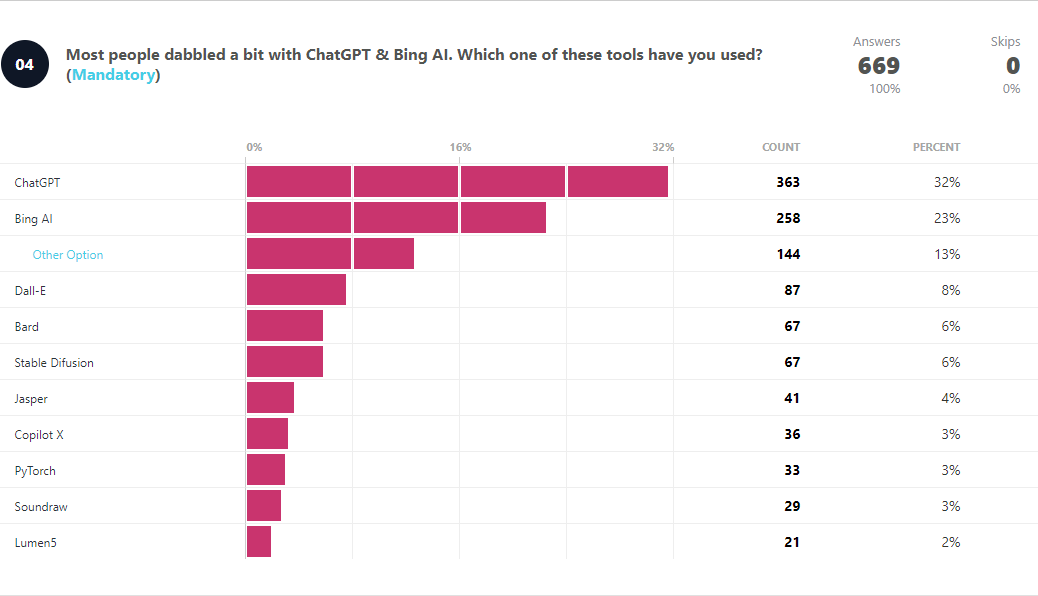

ChatGPT leads the way. Every way

In terms of usage, things start to fall apart for Google, as Bard is used by only 6% of respondents, surpassed by Dall-E and other not-so-mainstream options like LEX AI, AlphaZero, Artflow.ai, and Dropspace.

Here’s where ChatGPT shows its supremacy, with more than a third of users being comfortable with it. This is also confirmed by its fast-growing user base, which has surpassed 100 million monthly active users and continues to grow.

While there are a lot of alternatives on the market, most people come back to it because of its intelligent integrations with popular tools and its ease of access (maybe there’s a lesson here for Google?).

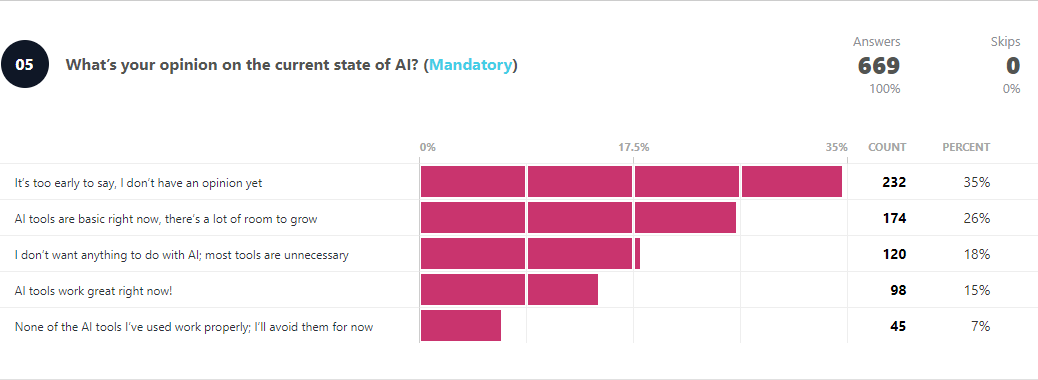

What is the current state of AI in 2023?

If we’re talking about the number of tools and services that are widely available, it’s growing exponentially. More companies are adopting artificial intelligence in their workflows, and this is only the beginning.

A third of the respondents don’t have an opinion about the current state of AI, while 26% think that most AI tools are basic right now and need a lot more improvement.

This is especially true in day-to-day tasks, as there are a plethora of browser extensions and software integrations that take advantage of artificial intelligence. Still, user needs and use cases change and evolve every day.

AI technology even at this point is an enabler to so much especially on the internet and is available to everyone, regardless of their choice of use or for what purpose. Without regulations or strict guidelines, it could result to anarchy beyond what the internet and computer technology could control and remedy in time. It is becoming so especially in developing countries with little or no restriction on the use of internet and other technologies that are fueled by AI in our modern day.

Anonymous respondent

With a more complex set of tasks comes the need for a more complex AI that can do much more than just simple chores. So people ask for it, developers work on delivering it, and the market shifts.

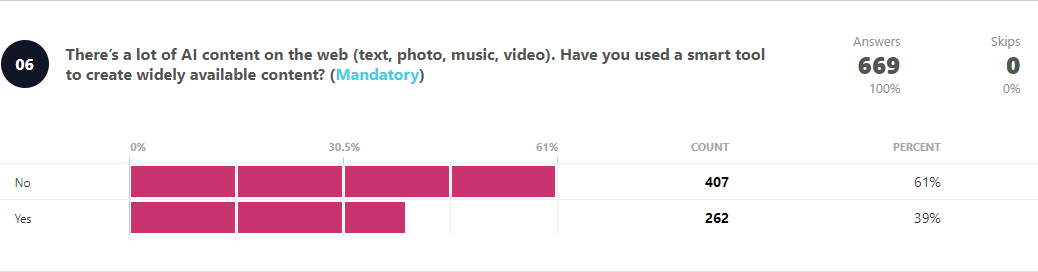

Still, 18% of the respondents want something other than AI. The main reasoning is that human work and input are sufficient, and there’s no need for automation tools. That’s also confirmed by the fact that more than 60% have yet to use AI to create widely available content.

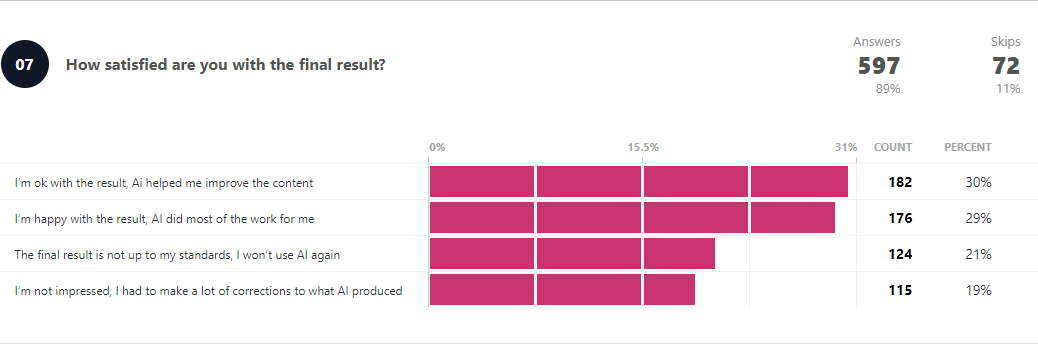

Of the remaining 39% who used tools to improve or optimize content creation, more than half are happy with the results.

This could mean one of two things: AI has become a lot more valuable and easier to use, or how people use it has improved dramatically. Either option further confirms that AI is here to stay (at least for the foreseeable future)!

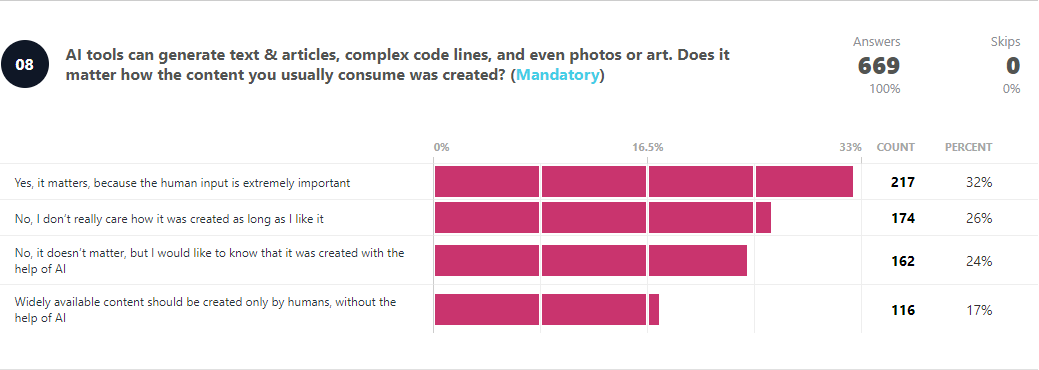

Does it matter how content is created?

AI should be made free and available to all, with the specific purpose of making certain work easier for humans. But not to the extent of relying on AI for content generation and research. All AI content should be identified as such, much like citations and references.

Anonymous respondent

This is a tricky question to answer because it involves a lot of variables and subjectivity. And that’s without bringing ethics into it (please find out more about that here). Also, depending on the type of content and how it is consumed (text, audio, video, etc.), things may vary quite a lot.

According to a third of respondents, it matters because human input is a must when it comes to creativity. On the other hand, a quarter of them say it comes down to preference and don’t care how the content was created.

AI-created content should be labeled as such, much as food now tells you if there are GMOs. It is frankly driving me more than a little crazy. It’s making people lazy and encouraging them to just go with the generated content. (How many times do you just go with the suggested word when you ideally wanted a synonym?) There’s a real issue emerging with younger people being substantially less literate thanks to the nannying.

Anonymous respondent

People are split on this, which is already a big win for artificial intelligence, as the rise of AI-generated content is just starting, with more to come shortly. The best way to go about this is to stay informed, know the pros and cons of AI-generated content, and actively and publicly share your opinion on the matter.

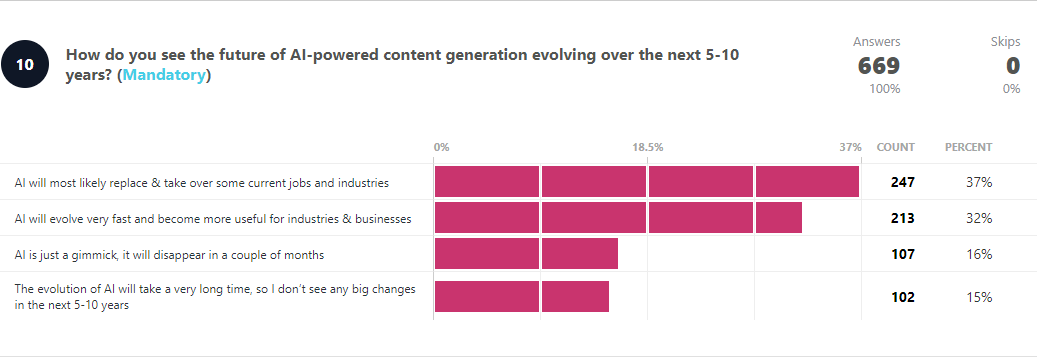

Is AI going to take over in the future?

According to 37% of our respondents, the answer is yes, at least in some industries. Another 32% stated that AI would become more useful for businesses, most likely as a day-to-day tool. So if you’re using Word, Excel, Photoshop, or any other tool daily, imagine the impact and time savings AI tools will bring.

But that’s only one side of the story because 31% of respondents don’t see a future in which artificial intelligence will play a role in our lives (metaverse, anyone?).

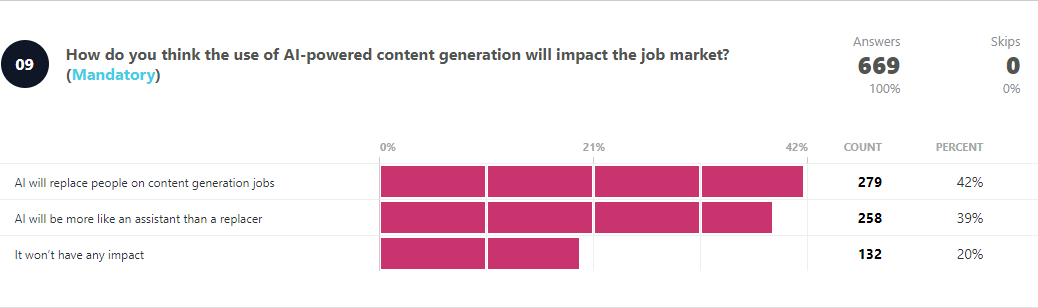

The trend also holds for content generation. This time, there is an almost even split, at about 40% each, between AI taking over content generation jobs and AI being just an assistant in the same industry.

To conclude, we asked our readers if they think there should be regulations or guidelines for the use of AI-powered content generation. Here’s what some of them said:

Regulations are definitely required. They may primarily be based on ethics and protect the interest of genuine content creators. They may also be cognizable and punishable like cyber crime.

Anonymous respondent

There should be regulations for AI as a whole, but I’m not sure about specific to content creation. Content creators DO need to know guidelines if their content is partially generated by AI, but then edited by a human (specific to trademarks or ownership of the content.)

Anonymous respondent

I have the reasoning that AI should yield to a framework of boundaries, to which Human Beings have the opportunity, to be the governor of AI technology and system’s. This must include a provision to abort the manufacture, procurement, implementation, modification(s) and resale of same.

Anonymous respondent

The answers vary, but the common ground seems to be regulation. Most people think that AI needs boundaries and specific rules, and that AI-generated content should be flagged accordingly.

What do you think? We’d love to hear your take on this and other topics you’d like us to cover in our upcoming exclusive surveys. Share your thoughts right here, and we’ll check them out!

About the data

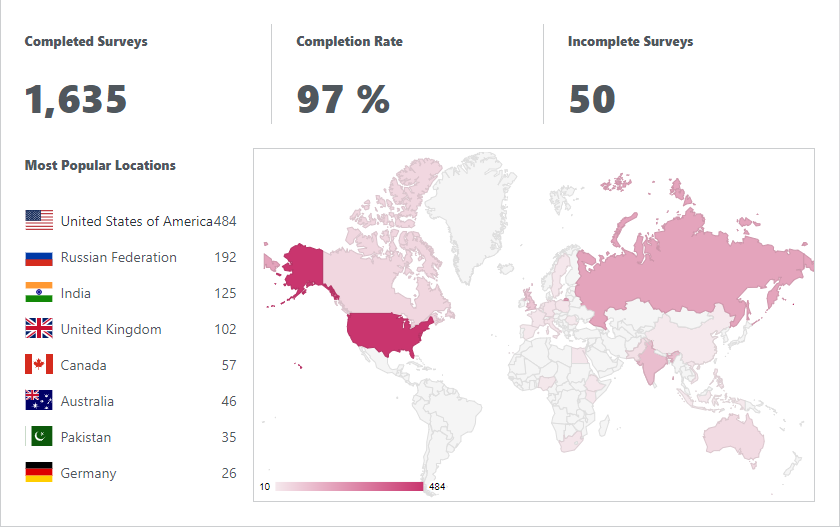

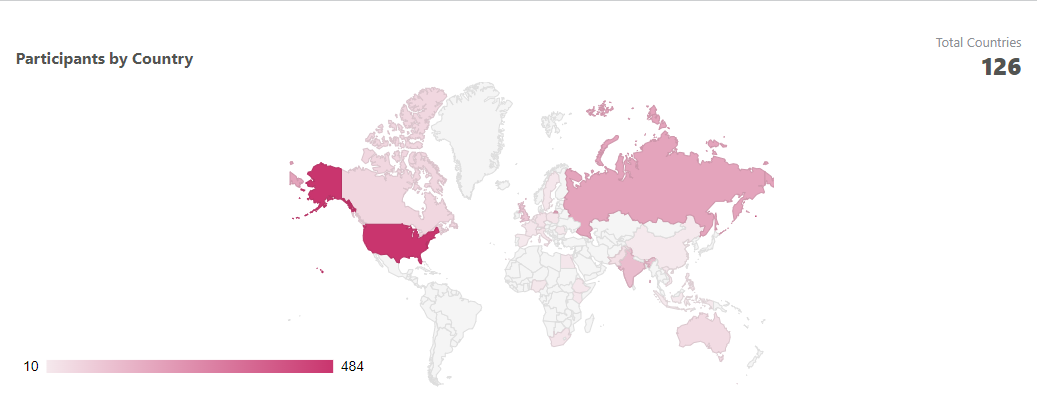

The research above is based on the input of our readers through an online survey that ran for three weeks on WindowsReport.com. Using Crowdsignal, a popular survey tool, we gathered 1635 complete answers to all of our questions.

We’ve received answers from 126 countries, but the most popular locations were:

- United States of America – 36%

- Russian Federation – 14%

- India – 9%

- United Kingdom – 8%

- Canada – 4%

- Australia – 3%
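
For a rough sense of scale, the shares above can be turned into approximate head counts. This is only a sketch: it assumes the published percentages are rounded shares of the 1635 complete answers, so the resulting counts are estimates rather than figures from the raw data. The names `TOTAL_RESPONSES`, `country_share_pct`, and `approx_count` are made up for illustration.

```python
# Total complete answers reported for the survey.
TOTAL_RESPONSES = 1635

# Country shares as published (rounded percentages).
country_share_pct = {
    "United States of America": 36,
    "Russian Federation": 14,
    "India": 9,
    "United Kingdom": 8,
    "Canada": 4,
    "Australia": 3,
}


def approx_count(share_pct, total=TOTAL_RESPONSES):
    """Estimate a head count from a rounded percentage share (assumption)."""
    return round(total * share_pct / 100)


# Estimated respondents per listed country.
approx_counts = {
    country: approx_count(pct) for country, pct in country_share_pct.items()
}

# Share left over for all countries not listed individually.
other_pct = 100 - sum(country_share_pct.values())

for country, count in approx_counts.items():
    print(f"{country}: ~{count} respondents")
print(f"All other countries: ~{other_pct}% (~{approx_count(other_pct)} respondents)")
```

Because the inputs are rounded to whole percentages, each estimate can be off by several respondents; the downloadable raw data remains the reference for exact counts.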

When it comes to platform distribution, readers who completed this survey mainly use Windows 10 (42%) and Windows 11 (41%). We’ve also recorded answers from people who use Windows 7, macOS, iPadOS, Linux, or Chrome OS.

The raw data used in the research above can be seen and downloaded here.

Source: News Release
