E-commerce has always forced brands into a tough choice: spend big on polished product visuals, or settle for something cheaper that doesn’t move the needle. High-end product photography looks great — crisp lighting, professional models, clean backdrops — but it demands real money. Studios, gear, retouching, and scheduling all eat into margins, especially if you’re managing hundreds of SKUs.

Video makes the problem worse. Where a photo shoot might wrap in a day, video production layers on camera movement, audio, multiple takes, and longer post-production timelines. A single product video can run $2,000 to $10,000 when you factor in crew, editing, and revisions. Most brands look at those numbers and skip video entirely for anything outside their top sellers.

That math is starting to shift. AI video tools now let brands generate product videos straight from existing images or product page URLs, compressing weeks of production into hours. The real question isn’t whether the technology works — it does. It’s whether the output is good enough to replace or supplement what a crew delivers on set.

Breaking Down the Traditional Production Model

Traditional product video follows a pretty standard playbook. The product gets prepped — unboxed, inspected, arranged so it catches light well. If a model or presenter is involved, there’s a whole casting and scheduling layer. If the concept calls for a specific setting, somebody has to book a studio or lock down a location.

Shoot day means assembling a crew: at minimum, a videographer and a lighting tech. Bigger productions bring in stylists, a director, assistants. The product gets filmed from multiple angles under different lighting setups. Demonstrations get choreographed and rehearsed. Things go wrong, shots get re-done, and the clock keeps running.

Then post-production starts. An editor combs through hours of raw footage, picks the best takes, builds a sequence. Color correction smooths out lighting inconsistencies. Music or voiceover gets layered in. Text overlays and graphics get added. The final cut gets rendered, sent for review, revised, and eventually approved.

For one product, that’s one to two weeks and a few thousand dollars. For a brand dropping 50 new products a quarter, the budget balloons fast. The common compromise? Shoot video for the hero products, leave everything else with static images and hope for the best.

How AI Changes the Production Economics

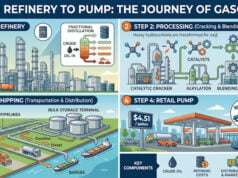

The AI workflow starts with assets most brands already have on hand: product photos and basic product info. Upload an image (or paste a product URL), and the platform analyzes what it’s looking at — identifying the product category, pulling out key features, and figuring out a video structure that makes sense.

From there, it generates motion. Static images get animated with rotations, zoom effects, or scene transitions. Backgrounds get synthesized to place the product in context — a lifestyle setting, a clean studio look, whatever fits. Text overlays call out benefits or specs. If you need a voiceover, AI speech synthesis handles that from a script.

The output is a finished video, usually 15 to 60 seconds, formatted for social or e-commerce. The whole cycle — upload to final render — takes minutes. Cost per video drops from thousands to often under $20, depending on the platform. That efficiency means brands can actually produce video for every single SKU, not just the top sellers. A fashion brand generates videos for every colorway. An electronics company covers every accessory. Coverage goes from selective to comprehensive.
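To put those figures side by side, here is a rough back-of-the-envelope sketch in Python. It assumes the numbers quoted in this article ($2,000 to $10,000 per traditional video, up to about $20 per AI video, 50 new products a quarter) and ignores anything else, such as platform subscriptions or staff time. The function and variable names are illustrative only.

```python
def quarterly_cost(videos: int, cost_per_video: float) -> float:
    """Total spend when every product gets exactly one video."""
    return videos * cost_per_video


# Figures taken from the article; treat them as rough estimates.
PRODUCTS_PER_QUARTER = 50
TRADITIONAL_LOW, TRADITIONAL_HIGH = 2_000, 10_000  # dollars per video
AI_UPPER = 20                                      # dollars per video

traditional_low = quarterly_cost(PRODUCTS_PER_QUARTER, TRADITIONAL_LOW)
traditional_high = quarterly_cost(PRODUCTS_PER_QUARTER, TRADITIONAL_HIGH)
ai_upper = quarterly_cost(PRODUCTS_PER_QUARTER, AI_UPPER)

print(f"Traditional: ${traditional_low:,.0f} to ${traditional_high:,.0f}")
print(f"AI: up to ${ai_upper:,.0f}")
```

Under those assumptions, full coverage costs $100,000 to $500,000 a quarter with a crew and about $1,000 with AI generation, which is why selective coverage has been the norm.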

Tools like HappyHorse 1.0 have pushed this further by enabling text-to-video and image-to-video generation with surprisingly natural motion, giving marketers more creative control without needing any production expertise.

The Role of Product Avatars in Bridging the Gap

Early AI product video had a noticeable weakness: no people. Products floated on screen, rotated and zoomed, but nobody held them up, talked about them, or showed how they worked. That made the videos feel cold. Product avatar technology has closed much of that gap — realistic digital humans can now present products, demonstrate usage, and speak about features without a single frame of live footage.

A product avatar holds the item, gestures naturally, and delivers a script with synthesized speech. The result looks close to the user-generated content or influencer review formats that perform well on social platforms. Audiences on TikTok and Instagram expect to see a person, not just a product spinning in space.

The scalability advantage is significant. One avatar, dozens of videos, consistent visual identity, no scheduling conflicts. You can swap scripts for A/B testing without re-shooting. You can customize the avatar’s look to match different target demographics or brand aesthetics.

The trade-off is authenticity. Avatar tech has improved a lot, but some viewers still catch the uncanny valley effect: the motion is almost right, the speech almost natural. In categories like beauty or wellness, where personal authenticity carries weight, a synthetic presenter might undercut trust. In categories where the product demonstration matters more than the personality behind it, such as tools, gadgets, and home goods, the compromise works better.

When Each Method Makes Sense

This isn’t an either/or decision. The right approach depends on what you’re making the video for.

Go traditional when brand perception is the priority. Luxury goods, high-consideration purchases, products where emotional storytelling drives the sale — these benefit from the intentionality and craft of a custom shoot. Same goes for tentpole campaigns, major product launches, and placements on premium channels like TV or high-CPM digital inventory.

Go AI when volume and speed matter more. E-commerce product pages perform measurably better with video — even straightforward video. Performance marketing campaigns need fast creative iteration to test what resonates. Seasonal refreshes and catalog updates become practical when production timelines shrink from weeks to hours.

The smartest brands are running both tracks. Traditional production handles the hero content — the stuff that defines brand tone and shows up in flagship placements. AI handles the long tail: comprehensive catalog coverage, rapid creative testing, always-on content for social feeds.
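The two-track split can be written down as a simple routing rule. The sketch below is one possible reading of the criteria in this section, not a definitive policy; the `VideoBrief` fields and the `choose_track` function are hypothetical names invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class VideoBrief:
    """Hypothetical description of one video request."""
    name: str
    is_hero_product: bool = False    # flagship item that defines brand tone
    is_luxury: bool = False          # brand perception is the priority
    premium_placement: bool = False  # e.g. TV or high-CPM digital inventory


def choose_track(brief: VideoBrief) -> str:
    """Route hero, luxury, or premium-placement work to a crew; the rest to AI."""
    if brief.is_hero_product or brief.is_luxury or brief.premium_placement:
        return "traditional"
    return "ai"


briefs = [
    VideoBrief("Flagship launch film", is_hero_product=True, premium_placement=True),
    VideoBrief("Phone case, blue colorway"),
]
for brief in briefs:
    print(f"{brief.name}: {choose_track(brief)}")
```

In practice a brand would tune these flags to its own catalog; the point is only that the decision follows from what the video is for, not from the tool.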

For the AI-generated work, platforms powered by models like Seedance 2.0 are raising the quality bar by generating more cinematic motion and better visual coherence — narrowing the gap between AI output and traditionally produced footage.

What the Future Workflow Looks Like

The direction is integration, not replacement. Traditional shoots aren’t disappearing — they’re getting more focused on the work that actually needs a human crew and creative direction. AI picks up everything else, and the quality ceiling keeps rising as the models improve.

We’ll likely see more hybrid setups where AI assists traditional production rather than competing with it. AI could draft storyboards, suggest shot compositions, or handle tedious post-production tasks like color correction and caption generation. The creative team focuses on direction and final polish; the machine handles the repetitive technical work.

For most brands right now, the practical move is straightforward: adopt AI video generation for catalog coverage and performance marketing, keep a smaller traditional budget for the content that demands real craft. It’s not about picking a side. It’s about deploying each method where it earns its keep.

Disclaimer

Artificial Intelligence Disclosure & Legal Disclaimer

AI Content Policy.

To provide our readers with timely and comprehensive coverage, South Florida Reporter uses artificial intelligence (AI) to assist in producing certain articles and visual content.

Articles: AI may be used to assist in research, structural drafting, or data analysis. All AI-assisted text is reviewed and edited by our team to ensure accuracy and adherence to our editorial standards.

Images: Any imagery generated or significantly altered by AI is clearly marked with a disclaimer or watermark to distinguish it from traditional photography or editorial illustrations.
